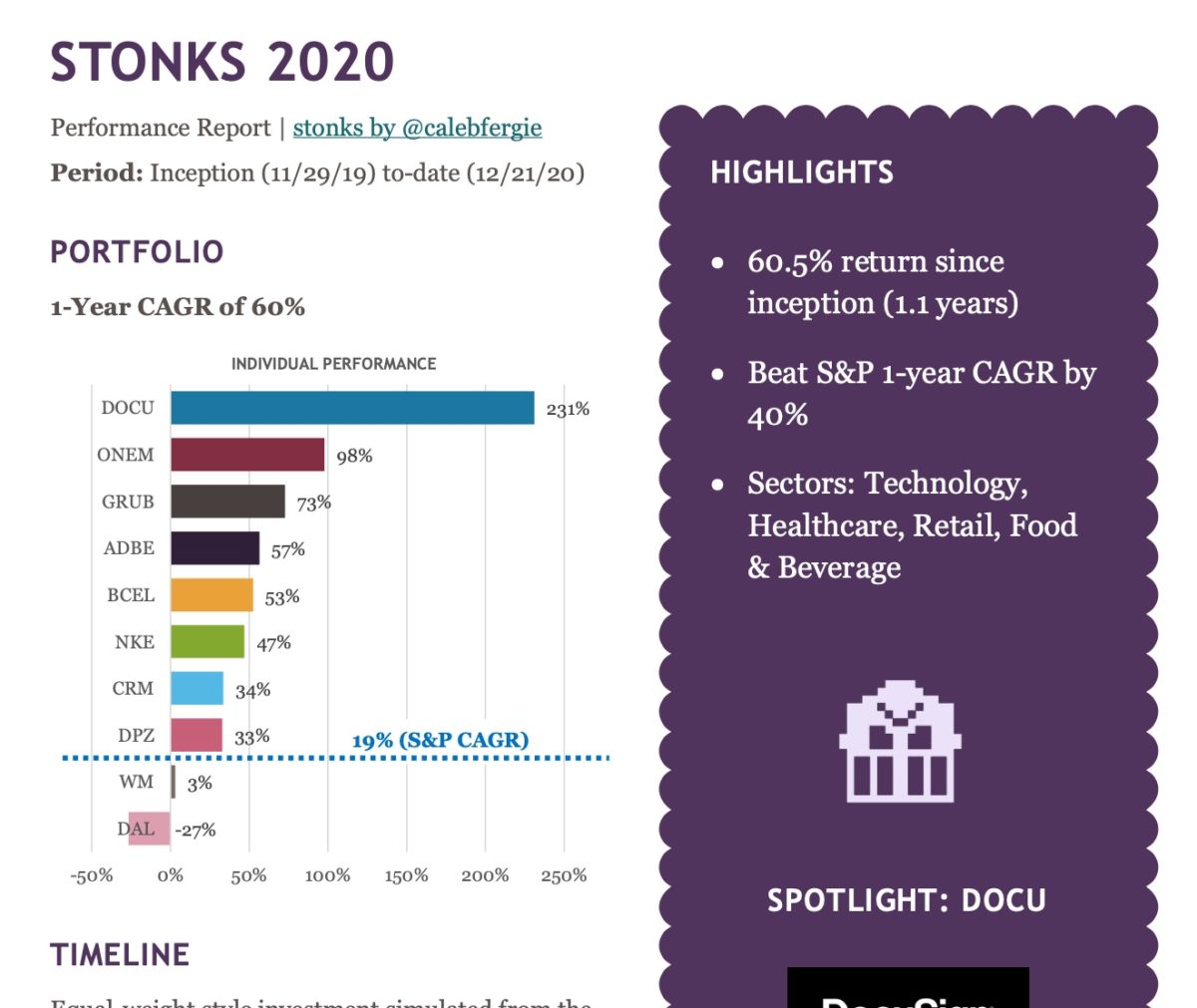

Annual simulated performance report of my totally unfounded, unsolicited stock picks. Continue reading “STONKS: Where are they now?”

Tag: data visualization

ConEd Energy Usage Data

Daily energy usage data for my apartment, taken from the ConEd website. Continue reading “ConEd Energy Usage Data”

Inspired by this video of eye tracking during video games, I used a Pupil Labs pupil tracker while playing… Continue reading “Pupil Tracking with Rocket League”

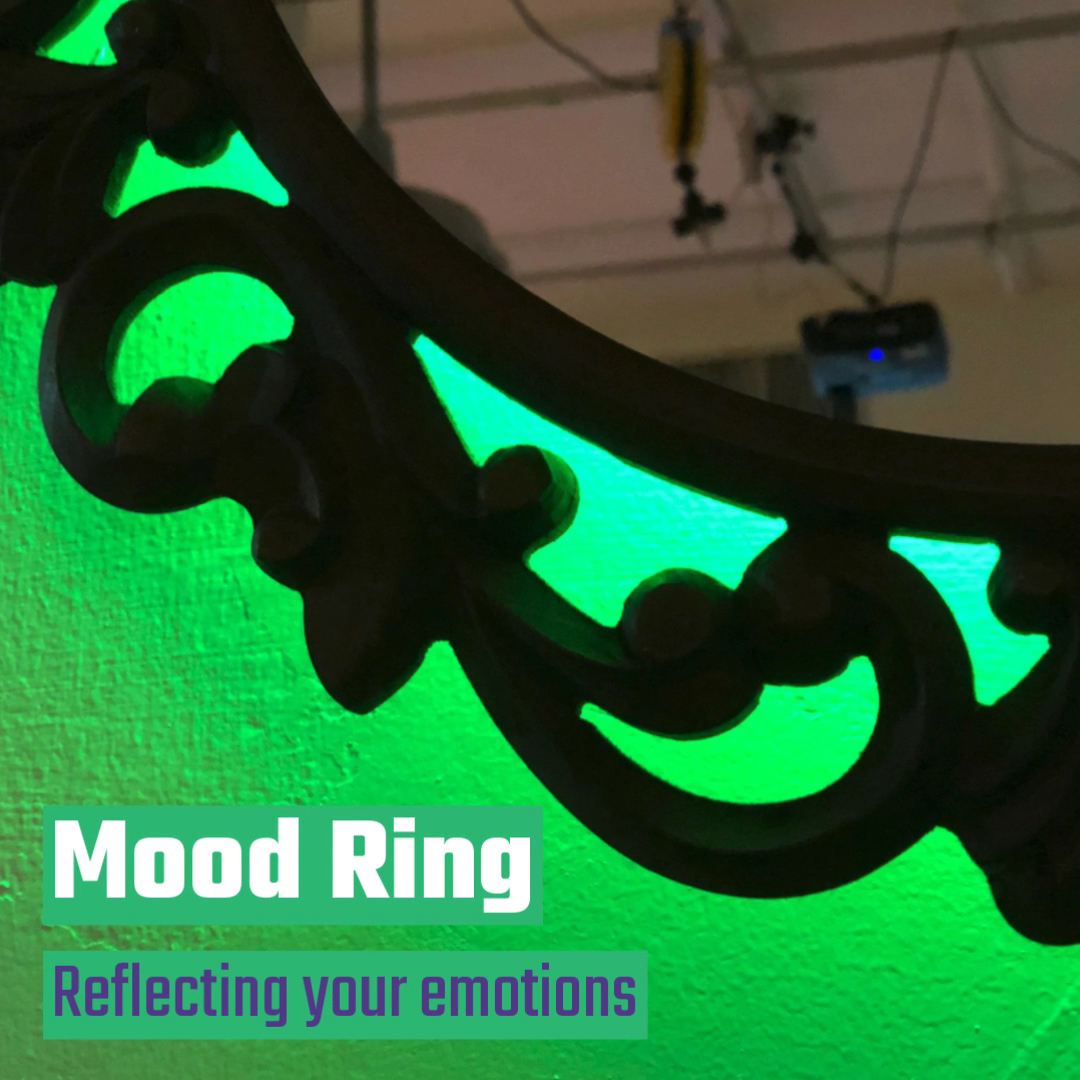

Mood Ring is an electric mirror that uses computer vision to light up based on your mood. Continue reading “Mood Ring”

My classmate Michael Blum and I have developed a proposal for a public intervention project that uses data art and visualization, called… Continue reading “Data & Publics: Invisible Crowds”

Building on my artist lyrics analyzer project, I made a small web app that searches an artist’s lyrics for the colors they mention… Continue reading “The Color of Music”

Using data from the musixmatch API, I created a tool that shows which words musical artists use most in… Continue reading “Lyrics API: Artist Word Choice”

The instant an Arduino or Raspberry Pi connects to the web with a public IP address, it is out there for… Continue reading “Cybersecurity Report: Connected DIY Devices”

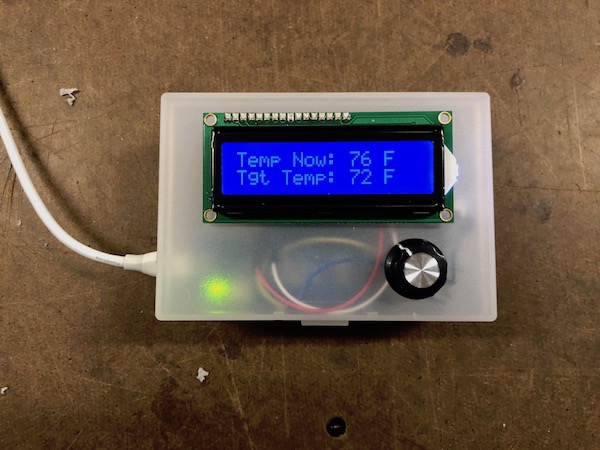

The connected thermostat I was building earlier is now complete! 🌡🌡🌡 This thermostat works like a Nest Thermostat (though clearly… Continue reading “A Simple Connected Thermostat”

Over the past few weeks, I have been working on my first ‘patch’, an interface for controlling an audio-visual… Continue reading “I’m a VJ now”